Home Stretch | Teaching robots to see better

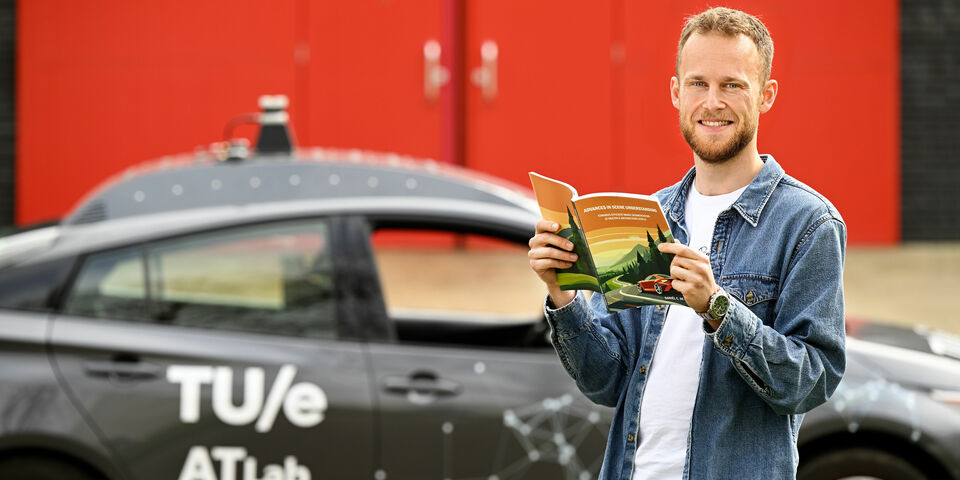

Before mobile robots, self-driving cars, or drones can be deployed in the real world, they need to be able to observe and understand the world around them, just like humans do. Daan de Geus, PhD candidate at TU/e, developed algorithms for automatic image recognition that are faster and more accurate than existing models. Last Wednesday, he obtained his PhD (with honors) at the Department of Electrical Engineering.

De Geus thumbs through his dissertation and shows us a photo of a street, featuring different objects such as people, vehicles, traffic posts, and traffic lights (see image below). “Mobile robots and self-driving cars need to know what’s happening around them,” he says. Among other things, they need to be able to recognize and localize objects, so they can take these into account. “This allows them to move around or toward a certain object, and maybe pick it up to carry out a task.”

To make robots aware of their environment, different computer vision techniques are used. Computer vision is an area of research that revolves around automatically extracting relevant information from camera footage. “Simply put, we try to make models that can distill as much information from a photo as possible,” the PhD candidate explains. “The goal of computer vision is to make a system that mimics our own visual system, allowing computers to see in the same way humans do and, as a result, interact properly with the world around them.”

His research focuses on improving image recognition techniques in the area of scene understanding. This is a small, but crucial part of computer vision. “Its goal is to recognize different objects and regions in an image and to give them a semantic label, such as ‘streetlight’, ‘road’, ‘car’, or ‘person’,” he explains. In other words, the objects are given a significance that is clear to humans.

Accuracy and efficiency

For automatic image analysis, neural networks are used – systems that learn to carry out a certain task by being trained on a large amount of data. By training these neural networks in a focused manner, you end up with different models that specialize in specific tasks, such as identifying all cars in an image. In the first part of his dissertation, De Geus looked at how you can improve the accuracy and efficiency of those models. “Improving one aspect is often at the expense of another. To get more accurate results, existing methods tend to lose some of their efficiency, and vice versa,” he explains. “Which makes sense, because greater accuracy often demands more computing power, which directly impacts efficiency.” The big question, therefore, is: how can you improve both aspects without having to compromise on either?

He came up with several solutions to this conundrum. “Efficiency is important for two reasons. You want the algorithm to use as little energy as possible and to make a prediction as quickly as possible,” he says. Speed is of the essence in self-driving vehicles, as cars must be able to respond to situations that arise in a timely manner. “If the calculation takes a second, it may already be too late to take action.”

Using a process known as model unification, he combined two models to create a more efficient one. “Some tasks focus on the objects in the foreground, such as cars and people; others on ‘background regions’ such as vegetation and the sky. These tasks are performed by two different neural network modules, as each module specializes in a different task,” he explains. “Using two network modules isn’t very efficient, because you have to run them in parallel.” He found that you can supply extra information to the network module processing the background information, allowing it to also identify foreground objects. “As a result, the module for foreground objects is no longer necessary, which greatly enhances efficiency. This makes this model twice as fast as its precursors, while its accuracy is comparable.”

Another way of improving efficiency is based on the observation that many regions in an image are very similar. For instance, the sky generally takes up a large part of any picture you take outside. “In spite of the fact that a lot of information is similar, neural networks process each image region separately, which is very inefficient,” says De Geus. The entire image is divided up into patches consisting of pixels (see image below). Normally, these would be labeled and assessed individually, but the PhD candidate developed a method for clustering patches with comparable information, reducing the total number of patches and, by extension, requiring less computing power. “This allows us to improve speed by as much as 110%, without taking away from the accuracy.”
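The idea of grouping similar patches can be illustrated with a toy example. The sketch below is not the method from the dissertation: it assumes a grayscale image stored as nested lists, compares patches only by their mean intensity, and uses a simple greedy grouping rule. The names `patch_means`, `cluster_patches`, and `threshold` are hypothetical and chosen for illustration only.

```python
from typing import List, Tuple

Image = List[List[float]]  # grayscale image as rows of pixel intensities


def patch_means(image: Image, patch_size: int) -> List[float]:
    """Split the image into square patches and return each patch's mean intensity."""
    height, width = len(image), len(image[0])
    means = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            pixels = [
                image[y][x]
                for y in range(top, min(top + patch_size, height))
                for x in range(left, min(left + patch_size, width))
            ]
            means.append(sum(pixels) / len(pixels))
    return means


def cluster_patches(means: List[float], threshold: float) -> Tuple[List[int], List[float]]:
    """Greedily group patches whose mean lies within `threshold` of a cluster's first member.

    Returns (cluster index per patch, representative value per cluster).
    This is an illustrative stand-in, not the clustering used in the research.
    """
    assignments: List[int] = []
    representatives: List[float] = []
    for mean in means:
        for index, rep in enumerate(representatives):
            if abs(mean - rep) <= threshold:
                assignments.append(index)
                break
        else:
            # No existing cluster is similar enough, so start a new one.
            representatives.append(mean)
            assignments.append(len(representatives) - 1)
    return assignments, representatives


# Toy 4x8 image: uniform "sky" on top, two different "ground" regions below.
image = [
    [200, 200, 200, 200, 200, 200, 200, 200],
    [200, 200, 200, 200, 200, 200, 200, 200],
    [50, 50, 50, 50, 120, 120, 120, 120],
    [50, 50, 50, 50, 120, 120, 120, 120],
]
means = patch_means(image, patch_size=2)
assignments, representatives = cluster_patches(means, threshold=10)
print(len(means), "patches reduced to", len(representatives), "clusters")
```

In this toy image, the four identical sky patches collapse into a single cluster, so any later processing step would handle three groups instead of eight patches. A real system would compare learned features rather than raw mean intensity.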

Incidentally, this method for grouping similar image regions is widely applicable to many different models and a great many applications other than self-driving cars and mobile robots. For example, you could use these algorithms to segment medical images. “Pretty much in any situation where you need to automatically analyze images.”

Multiple abstraction levels

In the second part of his dissertation, he focused on what he calls multiple abstraction levels. “Existing algorithms either focus on entire objects, such as cars, or on their components, such as car tires or the license plate,” he explains. His goal was to develop an algorithm that can simultaneously understand an image at multiple abstraction levels. “This will allow a mobile robot to observe both the car and its components, as well as other objects and the background, all at the same time, which gives it a comprehensive picture of the surroundings,” says De Geus.

You can have both algorithms carry out their work separately and merge the results afterwards, but this is cumbersome. And it can also lead to conflicts between the two calculations. Instead, he developed a new algorithm that can identify objects and components all at once. “That’s not only more accurate, but also more efficient.” What’s more, this improved model is widely applicable and has many advantages. “It means, for instance, that a robot isn’t just able to recognize a door, but also the door handle, and to understand that the latter is part of the door. Which, in turn, allows it to open the door,” he says to illustrate.
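To make the door example concrete, here is a minimal sketch of how a result with two abstraction levels might be represented once an algorithm has produced it. It assumes each recognized region carries a label and an optional link to the object it belongs to. The `Segment` class and `find_part` helper are hypothetical illustrations, not the output format of the algorithm described here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Segment:
    """One recognized image region with a human-readable label."""
    segment_id: int
    label: str
    parent_id: Optional[int] = None  # set when this segment is a part of another one


def find_part(segments: List[Segment], object_label: str, part_label: str) -> Optional[Segment]:
    """Return the first `part_label` segment whose parent is an `object_label` segment."""
    object_ids = {s.segment_id for s in segments if s.label == object_label}
    for segment in segments:
        if segment.label == part_label and segment.parent_id in object_ids:
            return segment
    return None


# Hypothetical scene: the handle is explicitly linked to the door it belongs to.
scene = [
    Segment(0, "wall"),
    Segment(1, "door"),
    Segment(2, "door handle", parent_id=1),
    Segment(3, "window"),
]
handle = find_part(scene, "door", "door handle")
print(handle)
```

Because the part carries a reference to its parent, a robot planner could look up the handle of a specific door directly, instead of reconciling two separate predictions afterwards.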

To be able to use self-driving cars or mobile robots in practice, there’s still a lot left to do. “Image recognition is just a tiny part of the puzzle,” he admits. And there are plenty of remaining challenges in the area. For one thing, models could be developed that can recognize more objects at once and that operate even faster and more accurately. The generalization of the model could also be improved, so it also produces good results when the data are different from the data sets with which the network was trained, allowing it to perform better in real-world scenarios. But at least his dissertation constitutes a step forward in making the system more capable, accurate and efficient.

PhD in the picture

What is that on the cover of your dissertation?

“A self-driving car, because that’s one of the most important potential applications of my research. They’re often depicted in the middle of a busy street, but I thought it would be fun to put it in a beautiful landscape so you can enjoy the scenery. It also has the symbolism of being on the road, looking toward the dot on the horizon. We’re not there yet, but I hope my research is another step in the right direction. And the different colors refer to the image regions and object components identified by the image recognition algorithms.”

You’re at a birthday party. How do you explain your research in one sentence?

“I develop image recognition algorithms that can help mobile robots to better observe and understand their surroundings.”

How do you blow off steam outside of your research?

“I love sports, both playing them – padel, racket ball, bootcamp – and watching them. I have a season ticket for Feyenoord soccer club, for instance.”

How does your research contribute to society?

“I hope this is a piece of the puzzle toward ultimately having mobile robots that can help people, for instance by improving traffic safety using self-driving cars or by delivering urgent goods to remote areas using mobile drones. Other examples include healthcare robots and robots that can take heavy and dangerous work out of human hands, such as construction robots.”

What is your next step?

“I’m planning to stay in academia. First, I’ll be doing a research visit to a computer vision lab of RWTH Aachen University starting in the summer. I hope to be able to stay in Eindhoven after that, to continue to grow and do research. I really like keeping up with recent developments and trying out things that nobody’s ever done before.”
